Cybersecurity in the Age of Gen-AI: Why Every Developer Must Care About Security

Introduction

Generative AI has moved faster than any primary technology wave in recent memory. In just a few years, tools that once felt experimental have become embedded in daily development workflows. Developers now rely on AI assistants to write code, debug logic, generate documentation, design APIs, and even make architectural suggestions. This shift has accelerated delivery cycles and lowered barriers to innovation across industries.

At the same time, this rapid adoption has quietly reshaped the cybersecurity landscape. Traditional security models assumed human-written code, predictable system behavior, and clearly defined boundaries between development and operations. Generative AI challenges all three assumptions. AI-generated code can introduce subtle vulnerabilities. Model-driven systems expand attack surfaces. Development teams move faster than governance structures can adapt.

Security cannot remain the sole responsibility of specialized teams. In the age of Gen-AI, developers shape security outcomes every day through the tools they choose, the prompts they write, and the code they deploy. A single insecure integration or poorly governed AI workflow can expose sensitive data, intellectual property, or customer trust.

This blog explores how generative AI is changing cybersecurity fundamentals, why developers now sit at the center of risk and resilience, and how organizations can embed secure practices into AI-enabled development without slowing innovation. It also outlines practical responsibilities developers must adopt and the organizational shifts required to support them.

How Generative AI Is Reshaping the Security Landscape

Generative AI does not simply accelerate existing development practices. It fundamentally alters how software is conceptualized, written, reviewed, and deployed. This shift introduces security implications that traditional development models were never designed to handle.

From Human-Centric Development to AI-Augmented Creation

Before generative AI, most security assumptions rested on predictable human behavior. Developers wrote code deliberately, reused familiar patterns, and relied on established libraries. Security reviews focused on logic errors, configuration mistakes, and known vulnerability classes.

With AI-assisted development:

- Code is generated faster than teams can thoroughly evaluate it, increasing the likelihood of subtle vulnerabilities slipping through reviews.

- Developers may implement solutions they do not fully understand because the output appears syntactically correct and confident.

- Patterns learned from public repositories may reflect insecure practices that once worked but no longer meet modern security expectations.

This shift does not imply poor judgment on the part of developers. It reflects how AI compresses decision-making time and changes the relationship between intent and implementation.

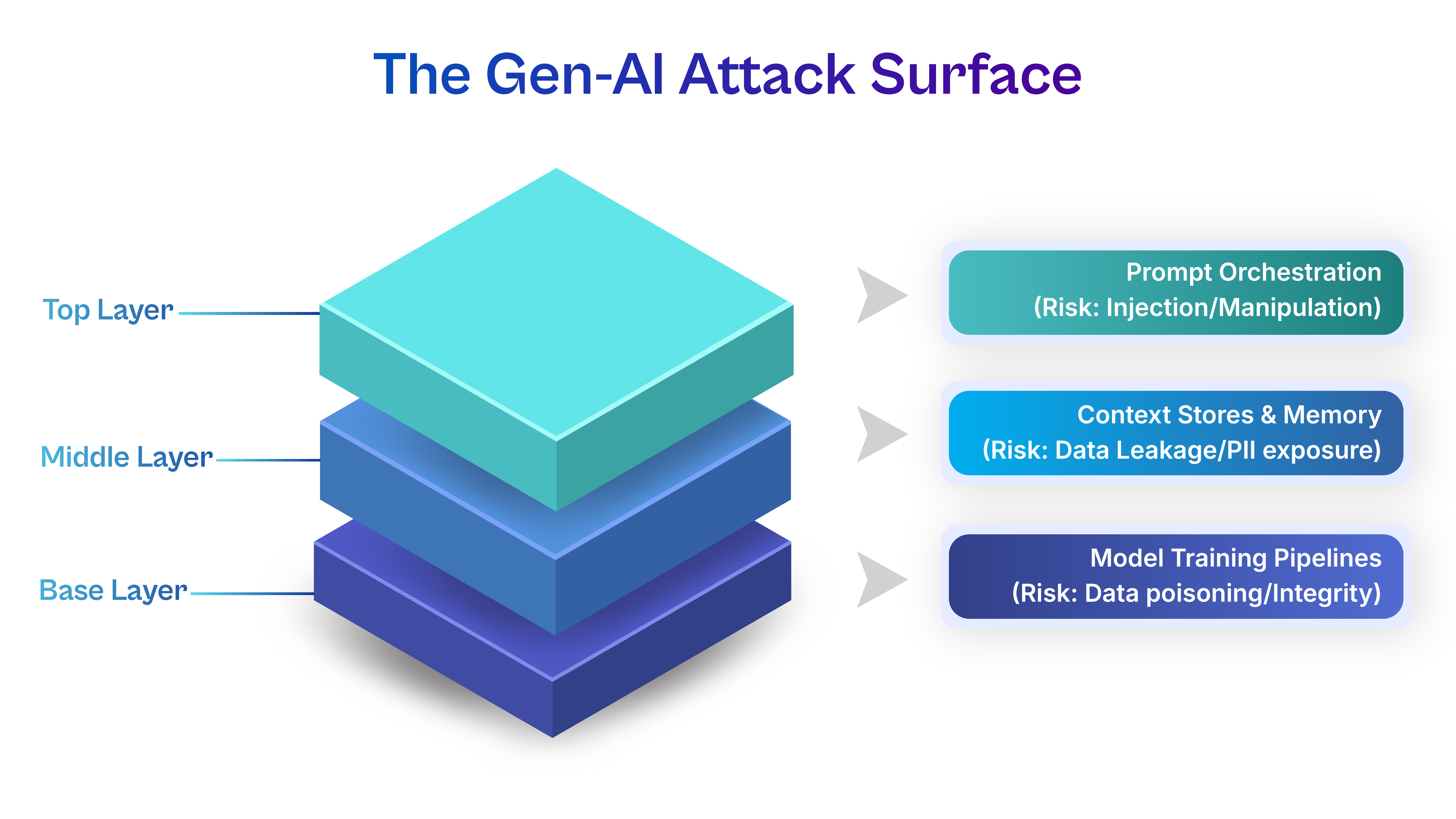

AI Introduces New Layers That Security Must Account For

Modern AI-enabled applications rely on interconnected components that expand the attack surface:

- Language models that interpret natural language rather than strict syntax

- Prompt orchestration layers that guide behavior

- APIs that connect models to internal systems

- Context stores that retain conversational memory

Each layer introduces opportunities for misconfiguration, misuse, or unintended exposure. Security teams must now consider risks that emerge from how systems reason, not just how they execute instructions.

Speed Without Structure Creates Invisible Risk

Generative AI rewards velocity. However, speed without guardrails allows insecure patterns to spread quickly across services. A flawed AI-generated function reused across multiple applications silently multiplies risk. Without deliberate checkpoints, teams often discover issues only after deployment, when remediation becomes costly and disruptive.

Why Developers Now Sit at the Center of AI Security

Security has always depended on developer decisions. Generative AI makes that dependency unavoidable.

Developers Control AI Inputs and Outputs

Every prompt influences what an AI model generates. Prompts can unintentionally expose proprietary logic, sensitive data, or system architecture details. Output handling determines whether AI responses get sanitized, validated, or blindly executed.

These choices rarely pass through centralized security teams, yet they carry significant risk.

Secure Design Starts in the IDE

When developers:

- Choose libraries suggested by AI

- Accept default configurations

- Reuse generated logic across services

They define the application’s security posture before deployment. Fixing flaws later costs more and disrupts operations.

According to Gartner, embedding security earlier in the development process reduces remediation costs and improves long-term system resilience, especially in AI-driven environments where complexity grows rapidly (Gartner, 2024).

AI Blurs Traditional Ownership Boundaries

In traditional models, security teams reviewed code written by humans. With AI assistance, authorship becomes shared between humans and machines. This complicates accountability unless organizations clearly define developer responsibilities for AI usage.

Developers must treat AI as a powerful collaborator, not an authority.

New Threat Vectors Introduced by Generative AI

Generative AI introduces threats that feel unfamiliar because they operate at the logic and language layer rather than traditional network or code boundaries.

Prompt Injection and Behavioral Manipulation

Prompt injection attacks exploit how AI systems interpret instructions. Unlike classic injection attacks that target databases, these attacks manipulate meaning and intent.

Developers face risk when:

- User input merges directly with system prompts without separation

- AI systems receive authority to perform actions based on natural language interpretation

- Models expose internal instructions or decision logic through poorly constrained prompts

A manipulated prompt can cause an AI system to bypass safeguards, reveal sensitive information, or perform unintended actions. Developers must recognize that prompts behave like executable logic and require the same discipline as code.

Data Leakage Through Context and Memory

Many AI tools retain conversational context to improve relevance. This creates risk when developers:

- Paste proprietary source code into external AI tools

- Share logs containing credentials or customer data

- Assume internal AI systems automatically enforce data boundaries

Once sensitive data enters an AI context, organizations may lose visibility and control over how that data persists, gets reused, or influences future outputs. This risk grows when teams lack clarity around retention policies and access controls.

Model Integrity and Training Risks

Organizations that fine-tune models using internal data must treat training pipelines as critical infrastructure.

Risks emerge when:

- Training data lacks validation or provenance checks

- Feedback loops allow biased or manipulated inputs to influence model behavior

- Developers assume model outputs remain stable over time

Small distortions in training data can accumulate into systemic weaknesses, affecting security logic, recommendations, or automated decisions.

The Shift From Reactive Security to Secure-by-Design Development

Generative AI accelerates the consequences of design decisions. Security must move upstream to remain effective.

Rethinking the Development Lifecycle

In traditional workflows, security appeared late in the cycle. AI compresses this cycle, leaving little room for reactive fixes.

A secure AI-aware lifecycle requires attention at each stage:

- Ideation: Define what AI should and should not handle

- Prompt design: Constrain behavior and isolate inputs

- Code generation: Validate dependencies and logic

- Integration: Limit permissions and scope

- Monitoring: Observe behavior drift and anomalies

Security becomes continuous rather than episodic.

Secure Prompt Engineering as a Core Skill

Prompts influence AI behavior as directly as code influences application behavior.

Secure prompts should:

- Clearly separate system instructions from user-provided input

- Avoid embedding sensitive information or internal rules

- Explicitly constrain actions the model may take

- Include validation logic for outputs before execution

Treating prompts casually increases the likelihood of misuse, even without malicious intent.

Monitoring AI Behavior Over Time

AI systems evolve based on inputs and usage patterns. Developers must collaborate with security teams to:

- Log prompt usage responsibly

- Detect unusual response patterns

- Identify degradation in output reliability

This visibility transforms AI from an opaque dependency into an accountable system component.

Why “Security Is Not My Job” No Longer Works

For many years, software development operated on an implicit division of responsibility. Developers focused on functionality and delivery, while security teams handled risk assessment, controls, and remediation later in the lifecycle. That separation was imperfect but manageable in slower, more predictable environments. Generative AI breaks that model completely.

AI-assisted development collapses timelines, increases abstraction, and distributes decision-making across tools that operate beyond traditional boundaries. Developers now influence security outcomes at the moment code is generated, prompts are written, and integrations are selected. Treating security as a downstream concern in this context creates blind spots that no review process can fully correct later. In the age of Gen-AI, security shifts from a specialized function to a shared responsibility embedded in everyday development work.

Shared Responsibility Becomes Mandatory

Cloud adoption already pushed security responsibilities closer to development teams by decentralizing infrastructure and automating deployment. Generative AI completes that shift by placing powerful decision-making tools directly in developers’ hands.

Developers now:

- Choose AI vendors and tools that differ widely in how they handle data retention, training, access controls, and transparency, directly shaping the organization’s risk exposure before security teams even engage.

- Integrate APIs and plugins with broad permissions that allow AI systems to access internal services, databases, or workflows, increasing the impact of misconfigurations or misuse.

- Control what data enters and exits AI systems through prompts, logs, and contextual inputs, often determining whether sensitive information remains protected or becomes inadvertently exposed.

Each of these decisions carries security consequences that cannot be fully mitigated after deployment. Responsibility shifts not because organizations want it to, but because AI-driven workflows make it unavoidable.

Regulatory and Compliance Pressure

As AI systems influence critical business processes, regulators increasingly focus on how organizations design, deploy, and govern these technologies. Scrutiny intensifies where AI intersects with sensitive data, automated decision-making, and customer-facing outcomes.

Developers play a critical role in this environment because:

- Development practices determine whether systems align with secure AI lifecycle expectations outlined in emerging frameworks.

- Poorly governed AI integrations can introduce compliance risks even when intent remains benign.

- Security controls implemented too late may fail to satisfy regulatory expectations for accountability and transparency.

Developers who understand these expectations help organizations translate policy into practice. Their awareness reduces compliance friction and strengthens resilience as regulations continue to evolve.

Trust as a Competitive Advantage

In AI-enabled products, trust becomes as important as functionality. Customers and partners expect systems that not only perform well but also behave predictably, protect data, and respect boundaries.

Developers influence trust through:

- Thoughtful handling of user data within AI workflows

- Careful validation of AI-generated outputs before they affect real users

- Responsible integration choices that prioritize reliability over convenience

Security failures erode confidence faster than missing features or delayed releases. In contrast, secure systems build reputational strength over time. When developers recognize their role in shaping trust, security becomes an internal requirement, embedded in product quality and a competitive differentiator.

Practical Security Responsibilities Every Developer Must Adopt

Generative AI has reshaped what it means to build software responsibly. Developers no longer work only with deterministic code and predictable inputs. They now collaborate with systems that generate logic, interpret language, and dynamically influence decisions. In this environment, security cannot remain a specialized concern addressed after features are complete. It must become part of how developers think, design, and validate their work from the very beginning.

Developers do not need to transform into security specialists to meet this expectation. Instead, they must internalize security awareness as a natural extension of professional judgment. Small, consistent choices made during development often determine whether AI-enabled systems remain resilient or become vulnerable. When developers understand how their everyday actions influence risk, security shifts from an obligation to a habit.

Day-to-Day Development Responsibilities

Developers should consistently:

- Review AI-generated code with the same skepticism applied to human-written code, recognizing that confident-looking output may still contain outdated practices, insecure defaults, or hidden assumptions that do not align with modern security standards.

- Validate authentication, authorization, and data-handling logic explicitly rather than assuming correctness, especially when AI tools generate boilerplate or integration logic that interacts with sensitive systems.

- Confirm that suggested libraries and frameworks meet organizational security and maintenance requirements, including active support, patch history, and compatibility with existing controls.

- Avoid copying AI outputs directly into production without contextual understanding, ensuring that generated solutions align with architectural intent and real-world usage conditions.

These practices reduce the risk of inadvertently introducing vulnerabilities while preserving the productivity benefits of AI.

Design-Time Decision Awareness

Security risks often originate from early design choices.

Developers influence risk when they:

- Decide which AI tools and models to integrate, shaping data exposure, dependency risk, and long-term maintainability.

- Define the level of autonomy AI systems have, including whether their outputs trigger actions automatically or require human validation.

- Choose where AI-generated outputs flow within applications, determining whether sensitive operations remain controlled or become indirectly exposed.

Early design decisions determine whether security remains manageable or becomes fragile as systems scale and evolve.

Responsible Tool Usage

Developers must understand the boundaries of the AI tools they use:

- What data the tool stores or retains, including whether prompts or outputs persist beyond a session and whether they contribute to future model behavior.

- Who has access to model outputs, particularly in shared environments where visibility may extend beyond the immediate development team?

- Whether usage complies with organizational policies, contractual obligations, and regulatory expectations tied to data protection and intellectual property.

Responsible usage ensures that productivity gains do not come at the cost of trust, confidentiality, or compliance.

By embedding security awareness into daily development habits and design decisions, developers protect not only the applications they build but also the organizations and users who rely on them. In an AI-driven landscape, responsible development becomes the foundation of sustainable innovation rather than a constraint on progress.

Organizational Support Developers Need to Succeed

While developers play a central role in securing AI-enabled systems, they cannot carry that responsibility in isolation. Generative AI increases complexity, compresses timelines, and introduces unfamiliar risks that extend beyond individual decision-making. Without organizational structure, even well-intentioned developers may take shortcuts simply to keep pace with delivery expectations.

Security in the age of Gen-AI succeeds when organizations treat it as an enablement function rather than a control mechanism. Clear policies, supportive tooling, and cross-functional alignment help developers make secure choices without slowing innovation. When organizations fail to provide this foundation, security becomes inconsistent, reactive, and dependent on individual vigilance rather than systemic resilience.

Clear and Practical AI Usage Policies

AI policies must move beyond generic compliance language and address real development scenarios. Effective policies act as decision frameworks rather than restrictive rulebooks.

- Clearly define which AI tools and platforms developers may use, including distinctions between internal models, third-party services, and experimental tools, so teams understand where sensitive work belongs and where experimentation is acceptable.

- Specify what categories of data developers may and may not share with AI systems, including source code, logs, customer information, and internal documentation, reducing ambiguity during day-to-day problem solving.

- Explain the reasoning behind restrictions to build trust and adoption, helping developers understand how data exposure, retention, and model behavior influence organizational risk.

- Establish guidance for prompt design, output validation, and logging expectations, ensuring that AI usage aligns with broader security and compliance goals.

- Commit to regular policy updates as tools evolve, signaling that governance adapts alongside innovation rather than lagging behind it.

Security Tooling That Fits Developer Workflows

Developers adopt security practices more consistently when tools integrate seamlessly into their existing environments. Security that disrupts productivity often gets bypassed, regardless of intent.

- Provide AI-aware static analysis and dependency-scanning tools that recognize patterns common to AI-generated code, enabling teams to identify risks early without manual overhead.

- Integrate security checks directly into IDEs and CI/CD pipelines so developers receive feedback while they work, not after deployment decisions are already made.

- Offer centralized prompt management or monitoring capabilities for production AI systems, enabling visibility into how models receive inputs and generate outputs without exposing sensitive content unnecessarily.

- Ensure access controls and permissions around AI services follow least-privilege principles, reducing the blast radius if a tool or integration becomes compromised.

- Invest in observability tools that track AI behavior over time, helping teams identify drift, anomalies, or misuse before issues escalate.

Cross-Functional Collaboration as a Default Operating Model

Generative AI dissolves traditional boundaries between development, security, legal, and data teams. Organizations must adjust collaboration models accordingly.

- Encourage early involvement of security teams during AI feature design so risks are addressed before architectural decisions solidify.

- Enable legal and compliance teams to understand technical workflows, ensuring regulatory expectations translate into practical implementation guidance.

- Create shared accountability for AI risk management across functions rather than isolating responsibility within a single team.

- Foster open communication channels where developers can raise concerns about AI behavior without fear of slowing progress or attracting blame.

- Align incentives so teams measure success not only by delivery speed but also by system reliability, trust, and long-term sustainability.

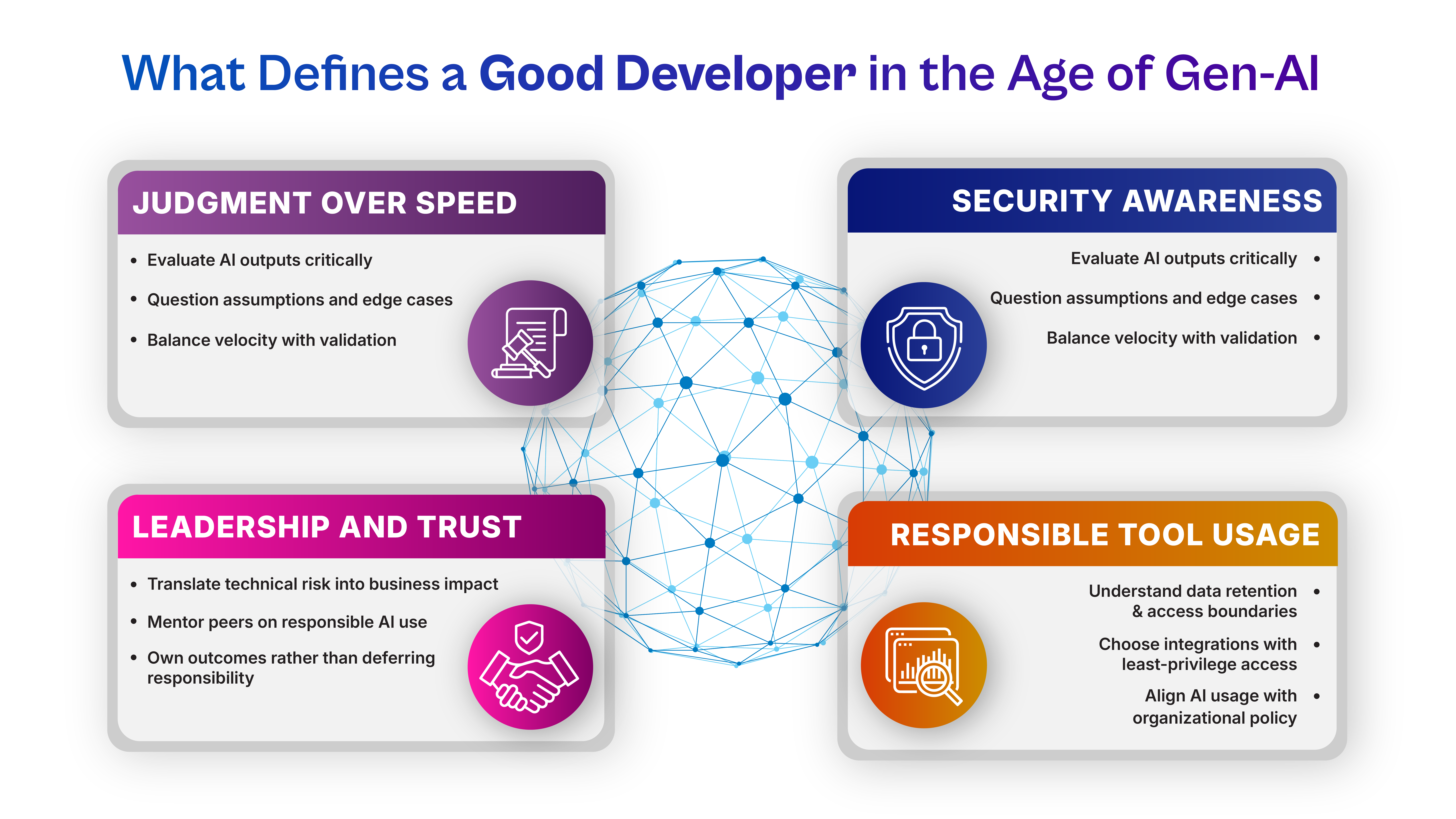

How Gen-AI Changes the Definition of a “Good Developer”

Generative AI challenges long-held assumptions about what it means to be a skilled developer. As tools automate syntax, structure, and repetitive tasks, technical execution alone no longer differentiates excellence. Instead, judgment, context awareness, and responsibility define impact.

In this new environment, developers act as stewards of systems that reason, adapt, and influence outcomes beyond deterministic logic. The quality of their decisions shapes not only functionality but also trust, safety, and ethical alignment. Security awareness becomes a defining attribute of professional maturity rather than a niche specialization.

Judgment Over Syntax and Speed

AI accelerates coding, but it does not replace human reasoning. Strong developers demonstrate value through discernment rather than volume.

- Evaluate AI-generated suggestions critically, questioning assumptions, edge cases, and security implications rather than accepting outputs at face value.

- Understand how and why a solution works before integrating it, ensuring maintainability and resilience under real-world conditions.

- Recognize when automation introduces unnecessary risk and choose simpler, more transparent approaches when appropriate.

- Balance delivery speed with thoughtful validation, resisting pressure to ship quickly at the expense of long-term stability.

- Treat AI as an assistant that augments decision-making rather than an authority that dictates outcomes.

Security Awareness as a Marker of Leadership

As AI systems influence broader business processes, security-conscious developers naturally emerge as leaders within teams.

- Anticipate how features could be misused or abused, not just how they function under ideal conditions.

- Raise security considerations early in discussions, shaping designs before risk becomes embedded.

- Mentor peers on responsible AI usage, spreading awareness organically across teams.

- Communicate effectively with security and non-technical stakeholders, translating technical choices into business impact.

- Demonstrate accountability by owning the consequences of design decisions rather than deferring responsibility.

Long-Term Career Relevance in an AI-Driven World

Tools, frameworks, and languages evolve quickly. Foundational judgment and ethical awareness endure.

- Developers who understand risk, governance, and system behavior adapt more easily to new technologies and roles.

- Security awareness enhances credibility with leadership, clients, and partners who increasingly evaluate teams based on trustworthiness.

- Professionals who think holistically about systems position themselves for senior technical and architectural roles.

- Ethical and security-conscious decision-making aligns personal growth with organizational resilience.

- In a landscape where automation handles execution, human judgment becomes the most valuable differentiator.

Looking Ahead: Security as a Design Skill

Generative AI will continue to evolve. Models will grow more capable, integrations will become more complex, and expectations will be higher. Security will not disappear into automation. It will demand better judgment at every layer.

Developers who embrace security as part of their craft will shape systems that scale responsibly. Organizations that support this shift will innovate without sacrificing trust.

Conclusion

Cybersecurity in the age of generative AI no longer lives at the edges of development. It lives inside prompts, code suggestions, integrations, and architectural decisions made every day. Developers influence security outcomes more directly than ever before, whether they recognize it or not.

As AI accelerates software creation, it amplifies both strengths and weaknesses. Treating AI as a neutral tool ignores the risks embedded in its design and usage. Treating security as someone else’s problem creates blind spots that attackers exploit quickly.

The path forward does not require slowing innovation. It requires redefining responsibility. When developers understand how generative AI reshapes risk and when organizations equip them with the proper guardrails, security becomes a shared advantage rather than a constraint. In that balance, businesses protect not only their systems but also the trust that sustains long-term growth.

Build AI Innovation on a Secure Foundation

Generative AI can accelerate development, but without the right security strategy, it can also expand risk. Organizations need development teams, governance frameworks, and technology practices designed for an AI-driven future.

Cogent Infotech helps enterprises implement secure, scalable AI development environments that balance innovation with resilience.

Connect with Cogent Infotech to strengthen your AI security strategy.

%402x.svg)